One of the keys to success in Google Adwords (and other pay-per-click services) is to write good ad copy. This isn’t easy, as the ads have a very restrictive format, reminiscent of a haiku:


- 25 character title

- 2×35 character description lines

- 35 character display url
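The length limits above are easy to check mechanically before an ad is submitted. The following is a minimal sketch, assuming an ad is held as a plain dictionary; the field names and the sample ad text are hypothetical, and it covers only the character counts, not the punctuation, capitalisation or trademark rules.

```python
# Character limits as listed above. The field names are hypothetical.
LIMITS = {
    "title": 25,
    "description1": 35,
    "description2": 35,
    "display_url": 35,
}


def over_limit(ad):
    """Return {field: excess_characters} for every field that is too long."""
    return {
        field: len(ad.get(field, "")) - limit
        for field, limit in LIMITS.items()
        if len(ad.get(field, "")) > limit
    }


# A made-up ad, used only to exercise the check.
ad = {
    "title": "Anti-virus software",
    "description1": "Protect your PC from viruses.",
    "description2": "Download the free trial now",
    "display_url": "www.myapp.com/freetrial",
}

print(over_limit(ad))  # an empty dict means every field fits
```

Reporting the excess per field, rather than a simple pass/fail, shows how many characters need trimming.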

What’s more, there are all sorts of rules about punctuation, capitalisation, trademarks etc. You will soon find out about these when you write ads. Most transgressions are flagged immediately by Google’s algorithms; others are picked up within a few days by Google staff (what a fun job that must be).

Google determines the order in which ads appear in their results using a secret algorithm based on how much you bid, how frequently people click your ads and possibly other factors, such as how long people spend on your site after clicking. Nobody really knows apart from Google, and they aren’t saying. The higher your click frequency, generally the higher your ad will appear. The higher your ad appears in the results, generally the more clicks you will get. So writing relevant ads is very important. This means that each adgroup should have a tightly clustered group of keywords and the ads should be closely targeted to those keywords.

There is no point paying for clicks from people who aren’t interested in your product, so you need to clearly describe what you are offering in the few words available. For example you might want to have a title “anti-virus software” instead of “anti-virus products” to ensure you aren’t paying for useless clicks from people looking for anti-viral drugs (setting “drugs” as a negative keyword would also help here).

I have separate campaigns for separate geographic areas. Each campaign contains the same keywords in the same adgroups, but with potentially different bid prices and ads. This allows me to customise the bid prices and ads for the different geographic areas. For example I can quote the £ price in a UK ad and the $ price in a US ad. Having separate campaigns for separate geographic areas is a hassle, but it is manageable, especially using Google Adwords editor.

Writing landing pages specific to each adgroup can also help to increase your conversion rate. It is worth noting that the ad destination url doesn’t have to match the display url. For example you could have a destination url of “http://www.myapp.com/html/landingpage1.html?ad_id=123” and a display url of “www.myapp.com/freetrial”.

Obviously what makes for good ad copy varies hugely with your market. Here are some things to try:

- a call to action (e.g. “try it now!”)

- adding/removing the price

- different capitalisation and punctuation

- keyword insertion (much beloved of eBay)

- changing the destination url

But, as always, the only way to find out what really works is testing. Google have made this pretty easy with support for conversion tracking and detailed reporting. I run at least 2 ads in each adgroup and usually more. Over time I continually kill off under-performing ads and try new ones. Often the new ads will be created by slight variations on successful ads (e.g. changing punctuation or a word) or splicing two successful ads together (e.g. the title from one and the body from another). This evolutionary approach (familiar to anyone who has written a genetic algorithm) gradually increases the ‘fitness’ of the ads.

But you need to decide how to measure this fitness. Often it is obvious that one ad is performing better than another. But sometimes it can be harder to make a judgment. If you have an ad with a 5% click-through rate (CTR) and a 0.5% conversion rate, is this better than an ad with a 1% click-through rate and a 2% conversion rate? One might think so (5 × 0.5 > 1 × 2), but this is not necessarily the case. I think the key measure of how good an ad is comes from how much it earns you for each impression your keywords get.

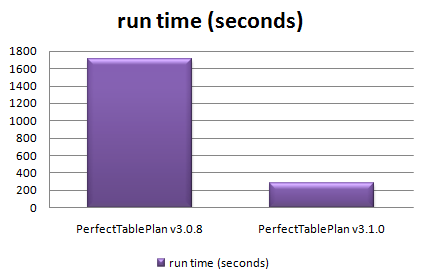

I measure the fitness by a simple metric ‘profit per thousand impressions’ (PPKI) where, for a given time period:

PPKI = ( ( V * N ) – C ) / ( I / 1000 )

V = value of a conversion (e.g. product sale price)

N = number of conversions (e.g. product sales) from the ad

C = total cost of clicks for the ad

I = impressions for the ad

Say your product sells for $30. Over a given period you have 2 ads in the same adgroup that each get 40k impressions and clicks cost an average of $0.10 per click.

- ad1 has a CTR of 5%, a conversion rate of 0.5% and gets 10 conversions, which gives PPKI=$2.5 per thousand impressions

- ad2 has a CTR of 1%, a conversion rate of 2% and gets 8 conversions, which gives PPKI=$5 per thousand impressions

So ad2 made, on average, twice the profit per impression despite the lower number of conversions. Given this data I would replace ad1 with a new ad: either a completely new one or a variant of ad2.
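The arithmetic behind those two figures can be reproduced with a few lines of Python. This is only a sketch of the PPKI formula as defined above; the function and parameter names are my own, and the helper that derives clicks and conversions from rates assumes a flat average cost per click, as in the example.

```python
def ppki(value, conversions, click_cost, impressions):
    """Profit per thousand impressions: ((V * N) - C) / (I / 1000)."""
    return (value * conversions - click_cost) / (impressions / 1000)


def ppki_from_rates(value, impressions, ctr, conversion_rate, cost_per_click):
    """Derive N and C from rates, then apply the PPKI formula."""
    clicks = impressions * ctr
    conversions = clicks * conversion_rate
    click_cost = clicks * cost_per_click
    return ppki(value, conversions, click_cost, impressions)


# The two ads from the example: $30 product, 40k impressions, $0.10 per click.
ad1 = ppki_from_rates(30, 40_000, ctr=0.05, conversion_rate=0.005, cost_per_click=0.10)
ad2 = ppki_from_rates(30, 40_000, ctr=0.01, conversion_rate=0.02, cost_per_click=0.10)

print(round(ad1, 2), round(ad2, 2))  # 2.5 5.0
```

For ad1 that is 2,000 clicks costing $200 against $300 of sales; for ad2 it is 400 clicks costing $40 against $240 of sales.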

PPKI has the advantage of being quantitative and simple to calculate. You can just export your Google Adwords ‘Ad Performance’ reports to Excel and add a PPKI column. Some points to bear in mind:

- Selling software isn’t free. You may want to subtract the cost of support, CD printing & postage, ecommerce fees, VAT etc. from the sale price to give a more accurate figure for the conversion value.

- PPKI doesn’t take account of the mysterious subtleties of Google’s ‘quality score’. For example an ad with low CTR and high conversion rate might conceivably have a good PPKI but a poor quality score. This could result in further decreases in CTR over time (as the average position of the ad drops) and rises in minimum bid prices for keywords.

- PPKI is a simple metric I have dreamt up; I have no idea if anyone else uses it. But I believe it is a better metric than cost per conversion, or any of the other standard Google metrics.

To ensure that all your ads get shown evenly, select ‘Rotate: Show ads more evenly’ in your Adwords campaign settings. If you leave it at the default ‘Optimize: Show better-performing ads more often’, Google will choose which ads show most often. Given a choice between showing the ads that make you most money and the ads which make Google most money, which do you think Google will choose?

Text ads aren’t the only type of ads. Google also offer other types of ads, including image and video ads. I experimented with image ads a few years ago, but they got very few impressions and didn’t seem worth the effort at the time. I may experiment with video ads in the future.

The effectiveness of ads can vary greatly. Back in mid-December I challenged some Business Of Software forum regulars to ‘pimp my adwords’ with a friendly competition to see who could write the best new ads for my own Google Adwords marketing. The intention was to inject some fresh ‘genes’ into my ad population while providing the participants with some useful feedback on what worked best. Although it is early days, the results have already proved interesting:

The graph above plots CTR against conversion rate for 2 adgroups, each running in both USA and UK campaigns. Each blue point is an ad. The ads, keywords and bid prices for each adgroup are very similar in each country (any prices in the ads reflect the local currency for the campaign). Points to note:

- There were enough clicks for the CTR to be statistically significant, but not for the conversion rate (yet).

- The CTRs vary considerably within the same campaign+adgroup, often by a factor of more than 3.

- Adgroup 1 performs much better in the USA than in the UK. The opposite is true for adgroup 2.

- Adgroup 1 for the USA shows an inverse correlation between CTR and conversion rate. I often find this is the case – more specific ads mean lower CTR but higher conversion rates and higher profits.
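One common way to judge whether a difference in CTR between two ads is statistically significant is a two-proportion z-test. The sketch below is an assumption on my part rather than the test used for the results above, and the click and impression counts are simply those implied by the ad1/ad2 PPKI example, not data from the competition.

```python
from math import erfc, sqrt


def ctr_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Two-sided two-proportion z-test on CTR; returns (z, p_value)."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both ads share one true CTR.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (ctr_a - ctr_b) / std_err
    # Two-sided tail probability of the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# Illustrative counts only: 2,000 clicks vs 400 clicks, 40k impressions each.
z, p_value = ctr_z_test(2000, 40_000, 400, 40_000)
print(f"z = {z:.1f}, p = {p_value:.3g}")
```

The same function can be pointed at conversions per click, but with only a handful of conversions per ad the normal approximation becomes unreliable, which fits the caution above about conversion rates. It also raises a division error if neither ad has any clicks.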

‘Pimp my adwords’ will continue for a few more months before I declare a winner. I will be reporting back on the results in more detail and announcing the winner in a future post. Stay tuned.

I first became interested in programming in about 1978, at the age of 12. I can recall the exact moment. I was in a classroom at The Royal Hospital School watching a very basic demo (written in BASIC) of a ball bouncing around a screen on an

I first became interested in programming in about 1978, at the age of 12. I can recall the exact moment. I was in a classroom at The Royal Hospital School watching a very basic demo (written in BASIC) of a ball bouncing around a screen on an  My

My

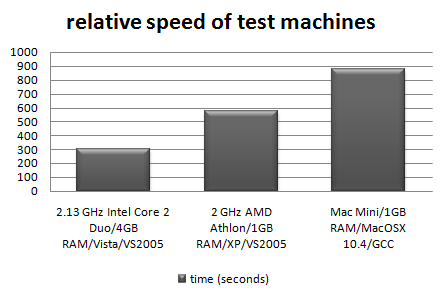

I need to support both MacOSX 10.4 (Tiger) and 10.5 (Leopard) for the latest release of

I need to support both MacOSX 10.4 (Tiger) and 10.5 (Leopard) for the latest release of  When I first released

When I first released

I have just had my first charge-back through GoogleCheckout. I shouldn’t moan at one charge-back in 8 months use as my secondary payment processor – except:

I have just had my first charge-back through GoogleCheckout. I shouldn’t moan at one charge-back in 8 months use as my secondary payment processor – except: Some years back my wife bought a PC and got a ‘free’ inkjet printer with it. It was a really lousy printer, but hey, it was free. When it ran out of ink we tried to get a new inkjet cartridge, but the cheapest set of cartridges we could find was £80. That was 4 times the price of other comparable cartridges at the time. Some further research showed that you could buy the printer for £20 – with cartridges! Their ugly sales tactics didn’t work. We threw it in the dustbin and bought an Epson inkjet, which gave years of sterling service using third party sets of cartridges costing less than £10.
